ADELPHI, Md. — Multidomain operations, or MDO, the Army’s future operating concept, require autonomous agents with learning components to operate alongside the Warfighter. New Army research reduces the unpredictability of current training methods for reinforcement learning policies so that the policies are more practically applicable to physical systems, especially ground robots.

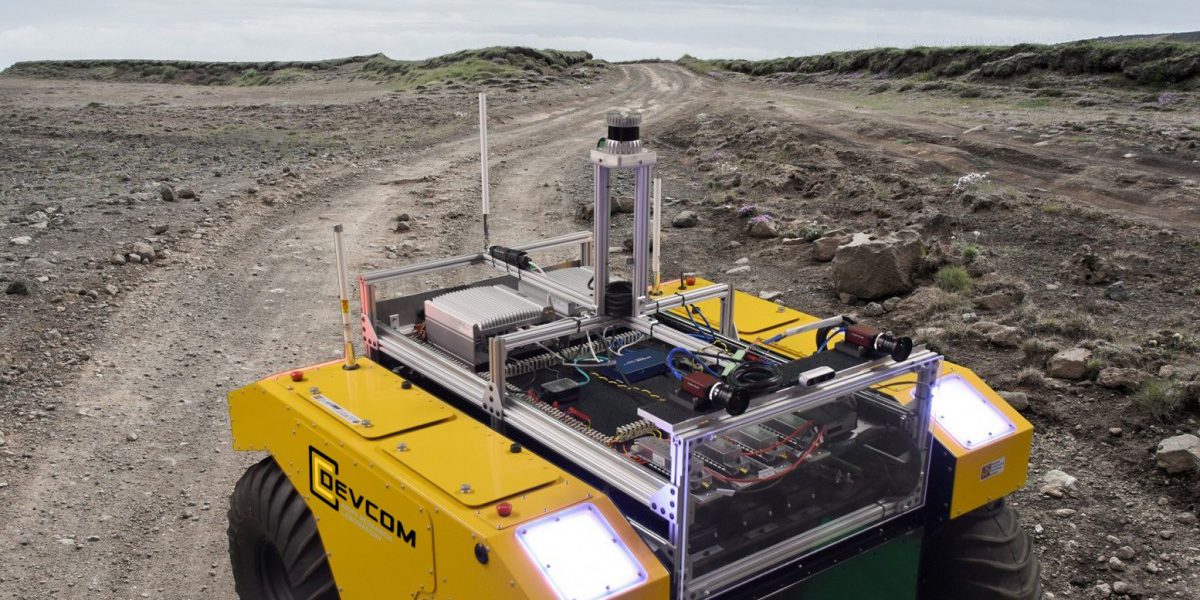

“These learning components will permit autonomous agents to reason and adapt to changing battlefield conditions,” said Army researcher Dr. Alec Koppel of the Army Research Laboratory, part of the U.S. Army Combat Capabilities Development Command, now known as DEVCOM.

“The underlying adaptation and replanning mechanism consists of reinforcement learning-based policies. Making these policies efficiently obtainable is critical to making the MDO operating concept a reality,” he said.

According to Koppel, policy gradient methods in reinforcement learning are the foundation for scalable algorithms for continuous spaces, but existing techniques cannot incorporate broader decision-making goals such as risk sensitivity, safety constraints, exploration, and divergence to a prior.

“Designing autonomous behaviors when the relationship between dynamics and goals is complex may be addressed with reinforcement learning, which has gained attention recently for solving previously intractable tasks such as strategy games like Go, chess, and videogames such as Atari and StarCraft II,” Koppel said.

“Prevailing practice, unfortunately, demands astronomical sample complexity, such as thousands of years of simulated gameplay,” he said.

This sample complexity renders many common training mechanisms inapplicable to the data-starved settings required by the MDO context for the Next-Generation Combat Vehicle, or NGCV.