INTRODUCTION

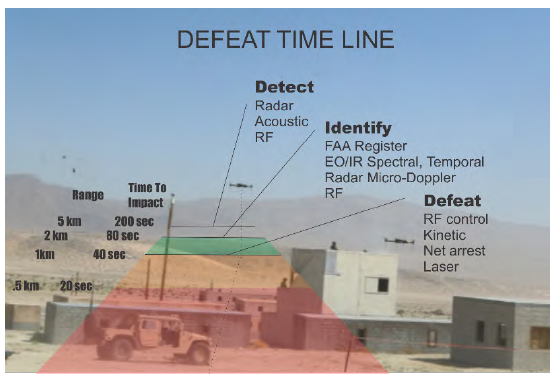

The recent emergence of small unmanned aerial systems (sUAS) into a broad range of commercial applications has led to a proliferation of easily accessible platforms that can be operated and modified with relatively little training. Because they are cheap, effective, and disposable, sUAS are an attractive option for state and nonstate actors alike to conduct surveillance or directly apply force. They are threatening because they are small and fast. If an sUAS payload poses a direct threat, the timeline to neutralize it is critical (Figure 1). This timeline is extremely short: an sUAS detected 1 km out can become effective within 40 s, and it must be defeated before then. Consequently, the military, intelligence community, and security firms have been working on methods to counter the unmanned aerial vehicle (UAV) threat, with many initiatives launched in the United States and overseas. This article focuses on detecting and classifying UAS threats, with a brief overview of mitigation or kill solutions.

Figure 1: Defeat Timeline (Source: QinetiQ).
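The timeline in Figure 1 can be turned into a simple engagement budget. Covering 1 km in 40 s implies an average closing speed of 25 m/s. The minimal Python sketch below assumes that constant speed plus purely hypothetical durations for each kill-chain stage (the stage names and times are illustrative, not from the source) and computes how much slack remains after detection at a given range; a negative result means the chain cannot finish before the sUAS becomes effective.

```python
"""Engagement-timeline budget: illustrative sketch with hypothetical stage times."""


def implied_speed_mps(range_m: float, time_to_effect_s: float) -> float:
    """Average closing speed implied by a range and a time to effect."""
    return range_m / time_to_effect_s


def engagement_slack_s(detect_range_m: float, threat_speed_mps: float,
                       stage_durations_s: dict[str, float]) -> float:
    """Time left after all kill-chain stages complete; negative means too late."""
    time_to_effect = detect_range_m / threat_speed_mps
    return time_to_effect - sum(stage_durations_s.values())


# 1 km in 40 s, as quoted in the text, gives a 25 m/s average closing speed.
speed = implied_speed_mps(1000.0, 40.0)

# Hypothetical stage durations in seconds; real values depend on the system.
stages = {"detect_and_track": 5.0, "cue_and_classify": 8.0, "engage_and_defeat": 10.0}

slack_at_1km = engagement_slack_s(1000.0, speed, stages)   # 40 - 23 = 17 s
slack_at_500m = engagement_slack_s(500.0, speed, stages)   # 20 - 23 = -3 s
```

Halving the detection range in this example turns a 17-s margin into a 3-s deficit, which is why detection range drives so much of the architecture.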

DETECTION AND CLASSIFICATION PROBLEMS

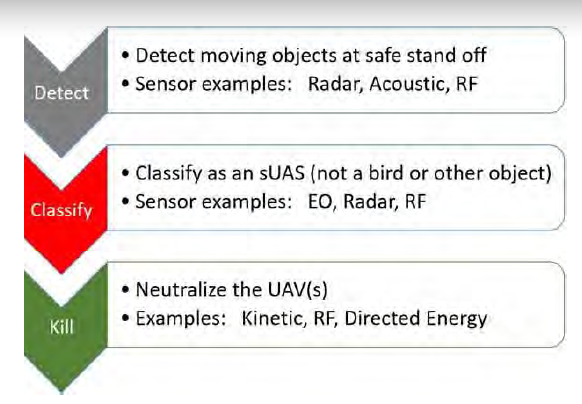

Detecting and classifying an sUAS threat well enough to engage a kill solution poses two primary problems. The first is detecting and classifying a class of very small objects that may move at very high or very low speeds (or hover). The second is that sUAS can present very different phenomenology because they come in different types (e.g., rotary or fixed wing, with a wide range of material structures, optical emissions, reflectivity characteristics, and radar cross sections [RCS]). This variability in size and profile means that, generally, no single system addresses the whole problem from detection to neutralization; rather, a system of systems is required to address the necessary tasks (see Figure 2). The detection and classification elements of this system are guided by the key detectable features of an sUAS: shape, size, material structure, velocity, communication signals, and high-frequency propeller and rotor blade movement/acoustics.

Figure 2: Top-Level Sequence of Events Necessary for Prosecuting Counter-UAV Missions (Source: QinetiQ).

DETECTION

As opposed to classification, detection refers simply to establishing that an object is present and distinct from its background and surroundings. UAS detection methods are those modalities likely to discriminate a UAS from its background but not necessarily to distinguish it from similar objects. The typical multisensor approach is to use one wide-area modality to detect a possible UAS and then have that sensor cue an additional, narrower field-of-view (FOV) asset to examine the possible UAS and distinguish it from similar objects (e.g., birds), other confusers, and signal noise.
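The cueing step amounts to a coordinate handoff: the wide-area sensor reports a plot in its own frame, and the narrow-FOV camera needs pointing angles from its own position. The Python sketch below assumes a local east-north-up (ENU) frame, a radar at the origin reporting range, azimuth (clockwise from north), and elevation, and a camera at a known, hypothetical offset. The `RadarPlot` type and function names are illustrative, and the sketch ignores Earth curvature, refraction, and sensor misalignment.

```python
import math
from dataclasses import dataclass


@dataclass
class RadarPlot:
    """Hypothetical radar report relative to the radar position."""
    range_m: float
    azimuth_deg: float    # clockwise from north
    elevation_deg: float  # above the horizontal


def plot_to_enu(p: RadarPlot) -> tuple[float, float, float]:
    """Convert a radar plot to east/north/up metres relative to the radar."""
    az = math.radians(p.azimuth_deg)
    el = math.radians(p.elevation_deg)
    horizontal = p.range_m * math.cos(el)
    return (horizontal * math.sin(az),   # east
            horizontal * math.cos(az),   # north
            p.range_m * math.sin(el))    # up


def camera_cue(p: RadarPlot, camera_enu: tuple[float, float, float]) -> tuple[float, float]:
    """Azimuth/elevation (degrees) the camera must point to in order to see the plot."""
    e, n, u = plot_to_enu(p)
    de, dn, du = e - camera_enu[0], n - camera_enu[1], u - camera_enu[2]
    azimuth = math.degrees(math.atan2(de, dn)) % 360.0
    elevation = math.degrees(math.atan2(du, math.hypot(de, dn)))
    return azimuth, elevation


# Target 100 m due north of the radar; camera mounted 100 m east of the radar.
cue = camera_cue(RadarPlot(100.0, 0.0, 0.0), (100.0, 0.0, 0.0))  # about (315.0, 0.0)
```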

Radar

The primary detection modality against sUAS is radar because of its range, sensitivity, and all-weather detection capability. Generally, radar systems have coarse resolution and cannot fully profile possible targets for classification or locate them with sufficient precision for targeting. Slow Class 1/Class 2 UAS may be missed by the same radar systems that track larger airframes at longer distances. Radar signals are also subject to obscuration and clutter from terrain features in settings such as forests and urban environments. Low-cost, low size, weight, and power (SWaP) active electronically scanned array (AESA) radars, staring-array radars, and three-dimensional (3-D) radars have been developed that serve the counter-UAS (C-UAS) mission well. Multiple AESA panels or 3-D radars can be arranged to cover a full 360° FOV across the entire upper hemisphere. These radars can detect sUAS at ranges of 1–3 km, depending on RF power and sUAS RCS. A separate, smaller class of RF detection systems can be used at close range.

Because sUAS threats are detected at shorter ranges, higher-frequency radars are preferred for this task; the Ku- and Ka-bands (12–18 GHz and 26.5–40 GHz, respectively) are ideal. This differs from most military-grade fire-control radars, which operate in the X-band (8–12 GHz) [1]. Experiments are also being conducted at millimeter-wave and even terahertz frequencies to enable detection of very small objects with low RCS signatures. Further radio frequency (RF) processing using linear frequency modulation techniques, chirp pulse Doppler, or ubiquitous frequency-modulated continuous-wave (FMCW) waveforms could enable detection of very-low-RCS objects and high-frequency rotary movement in high-clutter conditions.
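For FMCW waveforms like those mentioned above, range is recovered from the beat frequency between the transmitted and received chirps. The Python sketch below uses the textbook relation for a linear sawtooth chirp, R = c·f_b·T/(2B), together with the range resolution c/(2B). The 500-MHz bandwidth and 1-ms chirp duration are hypothetical parameters, and range-Doppler coupling is ignored.

```python
C = 299_792_458.0  # speed of light, m/s


def beat_frequency_hz(range_m: float, bandwidth_hz: float, chirp_s: float) -> float:
    """Beat frequency for a stationary target with a linear (sawtooth) FMCW chirp."""
    slope = bandwidth_hz / chirp_s          # chirp rate, Hz/s
    return slope * 2.0 * range_m / C        # chirp rate times round-trip delay


def range_from_beat_m(beat_hz: float, bandwidth_hz: float, chirp_s: float) -> float:
    """Invert the beat frequency to range: R = c * f_b * T / (2 * B)."""
    return C * beat_hz * chirp_s / (2.0 * bandwidth_hz)


def range_resolution_m(bandwidth_hz: float) -> float:
    """Targets closer together than c / (2B) fall into the same range bin."""
    return C / (2.0 * bandwidth_hz)


B, T = 500e6, 1e-3                      # hypothetical chirp bandwidth and duration
fb = beat_frequency_hz(1500.0, B, T)    # about 5.0 MHz for a target at 1.5 km
```

Widening the chirp bandwidth improves range resolution, which is one reason the higher bands, with more available bandwidth, suit small targets.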

A number of theoretical studies have evaluated RF performance in terms of target detection probability. The RCS of an sUAS such as the DJI Phantom 4 was estimated to be ~0.02 m². Radar modeling that assumes an AESA-based 3-D radar operating at Ka-band with 10-W output power predicts detection at ranges up to 2 km with reasonable false-alarm probability. Tracking algorithms can extract drone plots from noise, so sUAS detection and tracking are feasible.
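The quoted 2-km prediction can be used to explore how detection range scales. In the monostatic radar range equation, maximum detection range grows with the fourth root of transmit power times RCS when antenna gain, wavelength, losses, and the detection threshold are held fixed. The Python sketch below applies only that scaling to the baseline above (10 W, ~0.02 m², 2 km). It is a first-order estimate under those assumptions, not a substitute for the full radar model.

```python
def scaled_detection_range_m(baseline_range_m: float, baseline_rcs_m2: float,
                             baseline_power_w: float, rcs_m2: float,
                             power_w: float) -> float:
    """Fourth-root scaling from the radar range equation: R ~ (P_t * sigma) ** 0.25.

    All other terms (gain, wavelength, losses, required SNR) are assumed unchanged.
    """
    ratio = (rcs_m2 / baseline_rcs_m2) * (power_w / baseline_power_w)
    return baseline_range_m * ratio ** 0.25


BASE = dict(baseline_range_m=2000.0, baseline_rcs_m2=0.02, baseline_power_w=10.0)

# A target with half the RCS is detected at ~84% of the range (~1.68 km).
smaller_target = scaled_detection_range_m(**BASE, rcs_m2=0.01, power_w=10.0)

# Doubling range against the same target needs 2**4 = 16x the power (160 W).
doubled = scaled_detection_range_m(**BASE, rcs_m2=0.02, power_w=160.0)
```

The fourth-root dependence is why large RCS variations among sUAS translate into comparatively modest, but still significant, changes in detection range.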

The phenomenology of sUAS poses significant challenges to radar. Because sUAS RCS can vary significantly, multiband radars may be necessary. Further, the shorter-wavelength bands used to detect sUAS are more susceptible to interference from weather [1].

Frequently, radar must operate in staring mode, in which it surveys the entire area to be protected. Its ability to track multiple objects at once using electronic beamsteering is key to holding multiple possible UAS in memory, prioritizing them, and cueing electro-optical/infrared (EO/IR) assets to classify the objects in question.
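Prioritizing tracks for cueing can be as simple as ranking them by how soon each would reach the protected asset. The Python sketch below assumes hypothetical track fields (range to the asset and closing speed) and hands the limited EO/IR assets the tracks with the shortest time-to-go. The `Track` type and ranking rule are illustrative; fielded systems would weigh many more attributes.

```python
import heapq
import math
from dataclasses import dataclass


@dataclass
class Track:
    """Hypothetical radar track summary."""
    track_id: str
    range_m: float             # distance to the protected asset
    closing_speed_mps: float   # positive when the object is approaching


def time_to_go_s(track: Track) -> float:
    """Seconds until the track reaches the asset; receding tracks sort last."""
    if track.closing_speed_mps <= 0.0:
        return math.inf
    return track.range_m / track.closing_speed_mps


def cue_order(tracks: list[Track], max_cues: int) -> list[str]:
    """IDs of the tracks to hand to the EO/IR assets, most urgent first."""
    return [t.track_id for t in heapq.nsmallest(max_cues, tracks, key=time_to_go_s)]


tracks = [
    Track("A", 1000.0, 25.0),   # 40 s to go
    Track("B", 600.0, 10.0),    # 60 s to go
    Track("C", 300.0, -5.0),    # receding
    Track("D", 800.0, 40.0),    # 20 s to go
]
```

With two cameras available, `cue_order(tracks, 2)` would cue track D first and then A, even though B is physically closer than A.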

Radio Frequency

RF detection of sUAS is based on locating the direction of the communication signals between the UAV and its controller, or on interrogating the UAV to identify its type. Once this is known, an RF system can double as a kill solution, as it can also be used to take control of the sUAS and land it or disable it using further electronic warfare techniques. The key RF system characteristics for successful detection are power sensitivity, directional accuracy, and bands covered. RF detection is also a key alternative to radar in settings where radar transmissions and returns may be obscured.
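One common way to obtain the signal direction is phase interferometry. Two antennas separated by a distance d see the same emission with a phase difference Δφ, and the angle of arrival from broadside is θ = arcsin(Δφ·λ/(2πd)). The Python sketch below illustrates that relation only; it is not necessarily the method used by any system cited here. The 2.4-GHz frequency and half-wavelength spacing are assumptions, chosen because a spacing of λ/2 or less keeps the angle unambiguous.

```python
import math

C = 299_792_458.0  # speed of light, m/s


def angle_of_arrival_deg(phase_diff_rad: float, freq_hz: float, spacing_m: float) -> float:
    """Angle from broadside for a two-element phase interferometer."""
    wavelength = C / freq_hz
    s = phase_diff_rad * wavelength / (2.0 * math.pi * spacing_m)
    if abs(s) > 1.0:
        raise ValueError("phase difference inconsistent with this antenna spacing")
    return math.degrees(math.asin(s))


freq = 2.4e9                      # assumed control-link frequency
spacing = (C / freq) / 2.0        # half-wavelength baseline
aoa = angle_of_arrival_deg(math.pi / 2, freq, spacing)   # about 30 degrees
```

Directional accuracy, one of the key characteristics named above, depends on how precisely Δφ can be measured and on the baseline length.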

Both active and passive approaches to RF detection have been demonstrated [2]. Active RF detection is possible by emitting a Wi-Fi signal and measuring its returns. However, we focus on passive sensing because it is generally better suited to military applications where possible. Most drones communicate with their controller ~30 times per second, giving ample opportunities for sensing. They also have distinct signatures that allow them to be separated from the clutter of other wireless signals, which is key for operating in urban environments [2].
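The regular ~30 Hz exchange between drone and controller is itself a usable feature. The Python sketch below estimates the burst rate on one channel from hypothetical burst timestamps, using the median gap so that a few missed bursts do not skew the estimate, and flags rates near the 30 Hz figure quoted above. The function names and the 20% tolerance are assumptions; practical detectors combine many more signal features.

```python
from statistics import median


def burst_rate_hz(timestamps_s: list[float]) -> float:
    """Estimate burst repetition rate from the median inter-arrival time."""
    ts = sorted(timestamps_s)
    gaps = [b - a for a, b in zip(ts, ts[1:])]
    if not gaps:
        raise ValueError("need at least two bursts")
    return 1.0 / median(gaps)


def looks_like_control_link(timestamps_s: list[float], expected_hz: float = 30.0,
                            tolerance: float = 0.2) -> bool:
    """True if the burst rate is within +/- tolerance of the expected rate."""
    return abs(burst_rate_hz(timestamps_s) - expected_hz) <= tolerance * expected_hz


periodic = [k / 30.0 for k in range(60)]     # steady 30 Hz bursts
bursty = [0.0, 0.001, 0.5, 0.502, 1.3]       # irregular traffic
```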

While both military and commercial UAS attempt to use secure communications, these links are still susceptible to attacks that expose their underlying signatures. Frequency-hopping spread spectrum (FHSS) is a technique in which the carrier frequency is changed repeatedly to prevent jamming or sniffing. Academic experiments in Korea have shown FHSS to be vulnerable when attacked using software-defined radio [3]; the experiment was performed specifically against an sUAS and its controller. Once activity on the channel in use is detected (a widely established field of techniques exists for doing this), the period of the hopping sequence is extracted by looking for repetitions in the sequence, and pattern-matching algorithms can help. Baseband extraction can then provide further information for signal analysis [3].
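The period-extraction step can be illustrated with an idealized search for the shortest repeat in an observed sequence of hop channels. The Python sketch below is a hypothetical simplification: it assumes every hop was observed without error and requires at least two full repetitions. Real captures contain missed and spurious hops, which is where the pattern-matching algorithms mentioned above become necessary.

```python
from typing import Optional


def hop_period(channels: list[int], min_repeats: int = 2) -> Optional[int]:
    """Shortest period p such that channels[i] == channels[i + p] for all valid i.

    Returns None if no period repeats at least `min_repeats` times in the data.
    """
    n = len(channels)
    for p in range(1, n // min_repeats + 1):
        if all(channels[i] == channels[i + p] for i in range(n - p)):
            return p
    return None


observed = [3, 17, 8, 42, 11] * 3     # hypothetical observed channel indices
```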

Acoustic

Acoustic detection is another viable means of detecting possible sUAS. Acoustic sensors can use single microphones or arrays. Microphone arrays can triangulate a target’s location and velocity and track it. Beamforming algorithms are a common method for detecting and tracking targets in this case, and they can be augmented with additional processing techniques, such as a Kalman filter [4]. The U.S. Army Research Laboratory has demonstrated that while a UAS as small as Class 1 can be detected and tracked with a portable, inexpensive microphone array, acoustic signals are easily interfered with by noise from other sources (e.g., aircraft) [4].
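To show what the Kalman-filter augmentation adds, the Python sketch below smooths a stream of bearing estimates (for example, from a beamformer) with a constant-velocity Kalman filter, yielding both a bearing and a bearing rate. The `BearingKalman` class is a hypothetical illustration, not the ARL implementation; the noise settings and 10-Hz update rate are assumptions, and angle wrap-around at 0°/360° is ignored for brevity.

```python
class BearingKalman:
    """Constant-velocity Kalman filter on one bearing angle (degrees)."""

    def __init__(self, bearing_deg: float, accel_var: float = 0.5, meas_var: float = 4.0):
        self.x = [bearing_deg, 0.0]             # state: [bearing, bearing rate]
        self.P = [[1e3, 0.0], [0.0, 1e3]]       # large initial uncertainty
        self.q = accel_var                      # white-noise acceleration variance
        self.r = meas_var                       # bearing measurement variance, deg^2

    def predict(self, dt: float) -> None:
        """x = F x and P = F P F^T + Q, with F = [[1, dt], [0, 1]]."""
        b, rate = self.x
        self.x = [b + dt * rate, rate]
        (p00, p01), (p10, p11) = self.P
        q = self.q
        self.P = [
            [p00 + dt * (p01 + p10) + dt * dt * p11 + q * dt**4 / 4,
             p01 + dt * p11 + q * dt**3 / 2],
            [p10 + dt * p11 + q * dt**3 / 2,
             p11 + q * dt**2],
        ]

    def update(self, z: float) -> None:
        """Fold in one bearing measurement z (H = [1, 0])."""
        (p00, p01), (p10, p11) = self.P
        s = p00 + self.r                        # innovation variance
        k0, k1 = p00 / s, p10 / s               # Kalman gain
        y = z - self.x[0]                       # innovation
        self.x = [self.x[0] + k0 * y, self.x[1] + k1 * y]
        self.P = [[(1 - k0) * p00, (1 - k0) * p01],
                  [p10 - k1 * p00, p11 - k1 * p01]]


# Target bearing moving at 2 deg/s, sampled at 10 Hz (noise-free for clarity).
kf = BearingKalman(bearing_deg=10.0)
for k in range(1, 50):
    kf.predict(0.1)
    kf.update(10.0 + 2.0 * 0.1 * k)
```

The estimated bearing rate is what allows a track to be predicted forward when noise from other sources briefly masks the drone.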

CLASSIFICATION

EO/IR and Spectral

Once a possible sUAS is detected, a sensor must be cued that can home in on the detected track and verify what it is. EO/IR imaging systems discriminate sUAS based on their shape. While they can serve as detection systems, they are most adept at classification; full 360° monitoring with cameras at sufficient resolution to detect a UAS would be prohibitively expensive. The key performance parameters for an EO/IR sensing system in this capacity are range and resolution. Because the UAS is extremely small and must be examined at sufficient range that a kill system can be engaged in time to eliminate the threat before it approaches, imagers used for this purpose must have good optical magnification and fast frame rates. Magnification is determined mostly by the focal length of the optical assembly; its “f” number, calculated by dividing the focal length by the diameter of the aperture, governs how much light reaches the sensor. A long focal length relative to the size of the sensor creates a narrow FOV, which enables the camera to see the target UAS from far away. A good FOV for a dedicated C-UAS camera would be ~20°.
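The relation between focal length, sensor size, and FOV can be made concrete with the standard approximation FOV = 2·atan(w/(2f)) for a focal plane array of width w and a lens of focal length f focused at infinity. The Python sketch below also computes the f-number as focal length divided by aperture diameter. The 9.6-mm array width (for example, 1,920 pixels at a 5-µm pitch) and the lens values are hypothetical.

```python
import math


def horizontal_fov_deg(sensor_width_mm: float, focal_length_mm: float) -> float:
    """Full horizontal field of view for a lens focused at infinity."""
    return math.degrees(2.0 * math.atan(sensor_width_mm / (2.0 * focal_length_mm)))


def focal_length_for_fov_mm(sensor_width_mm: float, fov_deg: float) -> float:
    """Focal length that yields the requested field of view on this sensor."""
    return sensor_width_mm / (2.0 * math.tan(math.radians(fov_deg) / 2.0))


def f_number(focal_length_mm: float, aperture_diameter_mm: float) -> float:
    """Focal ratio N = f / D; a larger N admits less light."""
    return focal_length_mm / aperture_diameter_mm


width_mm = 1920 * 0.005                            # hypothetical 1,920 px at 5 um
f_20deg = focal_length_for_fov_mm(width_mm, 20.0)  # about 27.2 mm for a 20 deg FOV
```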

With magnification achieved, the imager’s sensor core must provide high enough spatial resolution that the small object can be suitably distinguished in the image for classification. The number of pixels in the camera’s focal plane array (FPA), its core photosensitive component, is the most important physical element in providing high resolution. Small FPAs with more (but smaller) pixels are worth the additional cost when the system itself needs to be small. Because of the short timeline available to defeat the threat, automated classification based on imagery is optimal.
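Whether the FPA provides enough resolution can be checked by counting how many pixels the target spans. Each pixel subtends an instantaneous FOV (IFOV) of roughly pixel pitch divided by focal length (in radians), so at range R it covers about R·IFOV meters. The Python sketch below uses hypothetical values (a 0.3-m target, 5-µm pixels, a 100-mm lens); how many pixels a classifier actually needs is system specific and is not asserted here.

```python
def ifov_rad(pixel_pitch_m: float, focal_length_m: float) -> float:
    """Angle subtended by one pixel (small-angle approximation)."""
    return pixel_pitch_m / focal_length_m


def pixels_on_target(target_size_m: float, range_m: float,
                     pixel_pitch_m: float, focal_length_m: float) -> float:
    """Number of pixels spanned by the target's linear dimension at this range."""
    footprint_m = range_m * ifov_rad(pixel_pitch_m, focal_length_m)
    return target_size_m / footprint_m


at_1km = pixels_on_target(0.3, 1000.0, 5e-6, 0.100)   # about 6 pixels
at_2km = pixels_on_target(0.3, 2000.0, 5e-6, 0.100)   # about 3 pixels
```

Because pixels on target scale with the inverse of pixel pitch, halving the pitch behind the same lens doubles the count, which is the trade that makes small, dense FPAs worth their cost.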

The most effective imaging systems for most classification tasks employ multiple spectral bands. Visible, near-IR, and short-wave IR cameras have distinct advantages in achieving the large pixel counts required for spatial resolution because the materials used to create their FPAs are simpler, which makes their sensors lower cost and more reliable. They also do not require the extensive cooling that long-wave IR (LWIR) sensors can need, giving them a size advantage.

However, mid-wave IR (MWIR) and LWIR sensors provide additional advantages for nighttime operation and for seeing through obscurants such as smoke, dust, and fog. A key measure of the sensitivity of these sensors is their noise equivalent temperature difference (NETD); a typical sensitivity suitable for the C-UAS classification mission is 40 mK. Their intricate materials make them more costly and typically lead to more dead pixels on their FPAs. They also require a design study to choose between a cooled and an uncooled sensor (the former is larger and more expensive). Nevertheless, the added functionality they bring in dealing with any sort of environmental degradation makes them worthwhile.

Radar

Specific radar techniques can also contribute to classification through analysis of micro-Doppler signatures. Micro-Doppler analysis can detect fast-moving components within an object, such as the rotor or propeller blades of the target UAS. sUAS present additional challenges to micro-Doppler analysis because of their low mass and small inertia: wind affects their flight significantly, which, coupled with their active stabilization, creates highly variable trajectories. This is problematic because high Doppler-frequency resolution requires extended coherent data collection.
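Two back-of-the-envelope numbers show why micro-Doppler is both useful and demanding. A blade tip moving at speed v produces a Doppler shift of up to 2v/λ, and the achievable Doppler resolution is roughly the inverse of the coherent dwell time. The Python sketch below evaluates both for a hypothetical 0.12-m rotor radius spinning at 6,000 rpm and observed by a 35-GHz (Ka-band) radar.

```python
import math

C = 299_792_458.0  # speed of light, m/s


def tip_speed_mps(rotor_radius_m: float, rpm: float) -> float:
    """Linear speed of the blade tip."""
    return 2.0 * math.pi * rotor_radius_m * rpm / 60.0


def max_micro_doppler_hz(tip_speed: float, carrier_hz: float) -> float:
    """Peak Doppler shift from a blade tip moving directly toward or away from the radar."""
    wavelength = C / carrier_hz
    return 2.0 * tip_speed / wavelength


def doppler_resolution_hz(coherent_time_s: float) -> float:
    """Frequency resolution is roughly the inverse of the coherent integration time."""
    return 1.0 / coherent_time_s


v_tip = tip_speed_mps(0.12, 6000.0)          # about 75 m/s
f_tip = max_micro_doppler_hz(v_tip, 35e9)    # about 17.6 kHz
res_10ms = doppler_resolution_hz(0.010)      # 100 Hz
```

Finer resolution requires a longer coherent dwell, and that dwell must be held while wind and active stabilization keep moving the airframe, which is the difficulty described above.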

PROCESSING

Because of the short timeline available to defeat the sUAS, at-sensor processing is critical. A human must not be in the loop between, for example, the radar or RF detection system and a camera. This opens up several architecture trades that vary greatly depending on whether the C-UAS solution must function for a small group of soldiers operating where they might not have access to higher-level command and control (C2) assets.

In the case of an all-in-one solution, such as a vehicle travelling with a small group or a ground-based portable system, the detection system must be able to process its information and cue the EO/IR sensor for classification. The EO/IR system, in turn, must reliably determine that the object in question is an sUAS in order to engage a kill solution. This requires advanced, small-format processors, such as the NVIDIA Jetson line, which are small and power efficient yet run advanced algorithms quickly.
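The requirement that the EO/IR system decide reliably before a kill solution is engaged can be expressed as a confidence gate running on the at-sensor processor. The Python sketch below is a hypothetical example: the labels, the 0.9 thresholds, and the three actions are assumptions for illustration, and the classifier that produces the label is not shown.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    DISMISS = "dismiss"
    KEEP_TRACKING = "keep_tracking"
    ENGAGE = "engage"


@dataclass
class Classification:
    label: str          # hypothetical labels: "suas", "bird", "aircraft", "unknown"
    confidence: float   # 0..1 score from an onboard classifier (assumed)


def decide(c: Classification, engage_at: float = 0.9, dismiss_at: float = 0.9) -> Action:
    """Engage only on a confident sUAS call; dismiss only on a confident non-sUAS call."""
    if c.label == "suas" and c.confidence >= engage_at:
        return Action.ENGAGE
    if c.label not in ("suas", "unknown") and c.confidence >= dismiss_at:
        return Action.DISMISS
    return Action.KEEP_TRACKING  # ambiguous: keep the camera on the track
```

Keeping ambiguous objects under observation, rather than forcing a binary call, buys more frames for the classifier at the cost of some of the defeat timeline.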

Radar-tracking, micro-Doppler analysis, image-segmentation, and material-identification algorithms are extremely complex, power- and processing-hungry processes that must factor into any C-UAS system design and go hand-in-hand with the choice of the sensors themselves. Even for a C-UAS asset intended to function with access to higher C2 systems, at-sensor processing is critical because of the short defeat timeline against a threat sUAS: a vast amount of data cannot be relayed to the C2 system, processed for detection or classification, and returned to the C-UAS system for prosecution in time. Linking to the C2 system, in this case, provides situational awareness of the drone threat but does not actually contribute to detecting, classifying, or defeating the target.

DEFEAT

Once the sUAS has been detected and classified, a defeat mechanism must be engaged. There are a number of means being used and evaluated for this task. Any of the following might be cued once a spectral system has made a classification.

RF-based drone takeover is ideal when an sUAS must be defeated in the presence of people or high-value infrastructure. In this case, electronic techniques are used to control the drone and either force it to land in a designated safe zone or, by jamming communication with its controller, make it return to its point of origin on its own. D-Fend Solutions’ EnforceAir is a current industry solution offering detection and location capabilities that can take over an sUAS and land it in a prechosen safe zone [5].

Another technique is to use a purpose-built drone on an intercept collision path. The interceptor is itself an sUAS. Some interceptors work by colliding with their targets, such as Anduril’s new interceptor [6], which is heavy and designed to survive the collision. Others, like the Skylord Hunter, use a C-UAS net payload to disable their targets [7].
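Putting an interceptor on a collision path requires predicting where the target will be. Assuming the target moves at constant velocity and the interceptor flies a straight line at constant speed (real interceptors typically rely on closed-loop guidance rather than a single prediction), the intercept time is the smallest positive root of |p + v·t| = s·t, where p is the target position relative to the interceptor, v the target velocity, and s the interceptor speed. The Python sketch below solves that quadratic in two dimensions; the function names and all numbers are hypothetical.

```python
import math
from typing import Optional


def intercept_time_s(rel_pos: tuple[float, float], target_vel: tuple[float, float],
                     interceptor_speed: float) -> Optional[float]:
    """Earliest t > 0 with |rel_pos + target_vel * t| == interceptor_speed * t.

    rel_pos is the target position relative to the interceptor (m).
    Returns None if the interceptor can never reach the target.
    """
    px, py = rel_pos
    vx, vy = target_vel
    a = vx * vx + vy * vy - interceptor_speed ** 2
    b = 2.0 * (px * vx + py * vy)
    c = px * px + py * py
    if abs(a) < 1e-12:                       # equal speeds: the equation is linear
        return -c / b if b < 0 else None
    disc = b * b - 4.0 * a * c
    if disc < 0:
        return None
    root = math.sqrt(disc)
    candidates = [t for t in ((-b - root) / (2 * a), (-b + root) / (2 * a)) if t > 0]
    return min(candidates) if candidates else None


def aim_point(rel_pos: tuple[float, float], target_vel: tuple[float, float],
              t: float) -> tuple[float, float]:
    """Where the target will be at time t, i.e., the point to fly toward."""
    return (rel_pos[0] + target_vel[0] * t, rel_pos[1] + target_vel[1] * t)
```

For a head-on case, a target 100 m away closing at 10 m/s is met by a 20-m/s interceptor after 100/30 ≈ 3.33 s.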

Kinetic destruction uses appropriately sized munitions aimed at the sUAS to defeat it, and proximity triggers may help destroy sUAS. Ground-based nets and latex cloud deployments can also be launched.

Directed energy is an additional means of defeating oncoming UAS and has recently been the focus of extensive military interest. Raytheon delivered its first High-Energy Laser Weapon System (HELWS) to the U.S. Air Force in 2019 for a year-long field evaluation [8] (Figure 3). This system, which leverages a multispectral targeting system, is a significant step forward in directed energy application; its results will help define future efforts.

Figure 3: Raytheon’s HELWS (Source: U.S. Air Force).

THE SWARM sUAS THREAT

We have so far focused on a single UAS, which applies to security and counterinsurgency contexts. The far more difficult problem, but one that must be solved if we are to compete against near-peer adversaries in the future battlespace, is defeating swarms of UAS. Swarms are already the subject of offensive research in the Defense Advanced Research Projects Agency (DARPA) OFFensive Swarm-Enabled Tactics (OFFSET) program [9], and in 2019 Russia declared its intent to create “Flock-93,” an operational concept in which upward of a hundred drones equipped with explosive payloads would attack convoys [10].

Against swarms, at-sensor processing is even more essential. Communicating data and video streams back to a C2 post for centralized processing and coordination would saturate communication channels and create a single point of failure; swarm offenses could easily overwhelm a centralized processing architecture within the C2 capability. Fortunately, industry advances can meet this challenge. Compact, low-cost processors have evolved into high-performance embedded computing solutions. Each sensor can dedicate processing to tracking or video, with specific functions that distill information into target attributes and significantly reduce the bandwidth needed back to the C2 center.
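The bandwidth argument is easy to quantify. The Python sketch below compares an uncompressed video stream with compact per-target reports packed with the standard `struct` module. The report layout, the 1280×720 8-bit 30-frame/s video, and the 10-target, 30-Hz reporting load are all hypothetical.

```python
import struct

# Hypothetical per-target report: track id, timestamp, azimuth, elevation,
# range, class id, confidence. "<" = little-endian, no padding -> 29 bytes.
REPORT_FORMAT = "<IdfffBf"
REPORT_BYTES = struct.calcsize(REPORT_FORMAT)


def pack_report(track_id: int, t_s: float, az_deg: float, el_deg: float,
                range_m: float, class_id: int, confidence: float) -> bytes:
    """Serialize one target report into a fixed-size binary message."""
    return struct.pack(REPORT_FORMAT, track_id, t_s, az_deg, el_deg,
                       range_m, class_id, confidence)


def raw_video_bps(width: int, height: int, bits_per_pixel: int, fps: int) -> int:
    """Uncompressed video bit rate."""
    return width * height * bits_per_pixel * fps


def report_bps(targets: int, rate_hz: int) -> int:
    """Bit rate of target reports for a given number of targets and update rate."""
    return REPORT_BYTES * 8 * targets * rate_hz


video = raw_video_bps(1280, 720, 8, 30)   # 221,184,000 bit/s uncompressed
reports = report_bps(10, 30)              # 69,600 bit/s
msg = pack_report(7, 12.5, 45.0, 10.0, 850.0, 1, 0.93)
```

Before any video compression is considered, the per-target reports in this example are more than three orders of magnitude smaller than the raw stream.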

Given these target attributes, the C2 processor is responsible for combining all measurements from individual sensors into track vectors, with classification, prioritization, and a filter for false detections from clutter. Candidate threats that pass through the processing filter receive a threat classification and an assigned target identification, are tracked with realistic motion gating, and are further locked in to maintain observation and tracking.
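Motion gating can be sketched as a distance test against each track's predicted position: a detection is a candidate for a track only if it lies within the distance the target could plausibly have moved since the last update, plus a margin for sensor error. The Python sketch below pairs detections to tracks greedily by nearest distance. The speed limit, error margin, 2-D geometry, and function names are assumptions; fielded trackers typically use statistical gates and global assignment instead.

```python
import math


def gate_radius_m(max_speed_mps: float, dt_s: float, sensor_sigma_m: float,
                  k_sigma: float = 3.0) -> float:
    """Farthest a real target could plausibly appear from its predicted position."""
    return max_speed_mps * dt_s + k_sigma * sensor_sigma_m


def associate(predicted: dict[str, tuple[float, float]],
              detections: dict[str, tuple[float, float]],
              gate_m: float) -> tuple[dict[str, str], list[str]]:
    """Greedy nearest-neighbour association inside the gate.

    Returns (track_id -> detection_id, unassigned detection ids); unassigned
    detections are candidates for new tracks or clutter.
    """
    pairs = []
    for tid, (tx, ty) in predicted.items():
        for did, (dx, dy) in detections.items():
            dist = math.hypot(dx - tx, dy - ty)
            if dist <= gate_m:
                pairs.append((dist, tid, did))
    pairs.sort()                                  # closest pairs first
    assignment: dict[str, str] = {}
    used: set[str] = set()
    for _, tid, did in pairs:
        if tid in assignment or did in used:
            continue
        assignment[tid] = did
        used.add(did)
    unassigned = [did for did in detections if did not in used]
    return assignment, unassigned


gate = gate_radius_m(max_speed_mps=30.0, dt_s=0.5, sensor_sigma_m=5.0)   # 30 m
predicted_tracks = {"T1": (0.0, 0.0), "T2": (100.0, 0.0)}
dets = {"a": (10.0, 5.0), "b": (95.0, 3.0), "c": (500.0, 500.0)}
result = associate(predicted_tracks, dets, gate)
```

Here detection "c" falls outside every gate and is left unassigned, which is how the gate filters clutter and seeds new candidate tracks.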

CONCLUSIONS

Countering UAS through detection, classification, and mitigation is a current problem, and both threat and counter-threat capabilities will continue to advance in the coming years. UAS continue to increase in use and decrease in cost. Current research demonstrates successful individual capabilities, but combining these systems to leverage the benefits of each is likely to have a greater impact. On-sensor processing and automated algorithms will continue to be very important.

ACKNOWLEDGMENTS

The author thanks Chris Sheppard, Wes Procino, and Abraham Isser (all QinetiQ, Inc.) for their technical assistance.

REFERENCES

- Wilson, B., S. Tierney, B. Toland, R. M. Burns, C. P. Steiner, C. S. Adams, M. Nixon, M. D. Ziegler, J. Osburg, and I. Chang. “Small Unmanned Aerial System Adversary Capabilities.” Homeland Security Operational Analysis Center, 2020.

- Nguyen, P., M. Ravindranathan, R. Han, and T. Vu. “Investigating Cost-Effective RF-based Detection of Drones.” DroNet ’16: Proceedings of the 2nd Workshop on Micro Aerial Vehicle Networks, Systems, and Applications for Civilian Use, pp. 17–22, June 2016.

- Shin, H., K. Choi, Y. Park, J. Choi, and Y. Kim. “Security Analysis of FHSS-type Drone Controller.” In: Information Security Applications, Springer: Lecture Notes in Computer Science, vol. 9503, 2015.

- Benyamin, M., and G. H. Goldman. “Acoustic Detection and Tracking of a Class I UAS With a Small Tetrahedral Microphone Array.” ARL-TR-7086, U.S. Army Research Laboratory, pp. 16–17, September 2014.

- “D-Fend Solutions’ EnforceAir selected by the U.S. DIU during ‘Counter Drone 2’.” Military Press Releases, https://www.militarypressreleases.com/2020/02/04/d-fend-solutions-enforceair-selected-by-the-u-s-diu-during-counter-drone-2/, accessed 23 April 2020.

- Ward, J., and C. Sottile. “Inside Anduril, the Startup That Is Building AI-powered Military Technology.” NBC News, https://www.nbcnews.com/tech/security/inside-anduril-startup-building-ai-powered-military-technology-n1061771, 3 October 2019.

- “Skylord Hunter Short.” Vimeo, https://vimeo.com/390202512/67b3c4d5cc, accessed 15 May 2020.

- Keller, J. “Raytheon Delivers First Laser Counter-UAV System.” Military and Aerospace Electronics, https://www.militaryaerospace.com/power/article/14069489/laser-weapon-counteruav-directedenergy, accessed 23 April 2020.

- Defense Advanced Research Projects Agency (DARPA). “OFFensive Swarm-Enabled Tactics (OFFSET).” https://www.darpa.mil/work-with-us/offensive-swarm-enabled-tactics, accessed 23 April 2020.

- Atherton, K. D. “Flock 93 Is Russia’s Dream of a 100-Strong Drone Swarm for War.” C4ISR News, https://www.c4isrnet.com/unmanned/2019/11/05/flock-93-is-russias-dream-of-a-100-strong-drone-swarm-for-war/, accessed 23 April 2020.